Episodic Semantic Scene Analysis

Contact Persons

Federico Tombari, Helisa Dhamo, Azade Farshad

Abstract

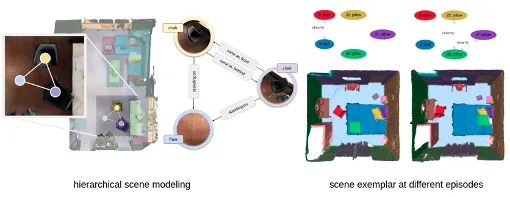

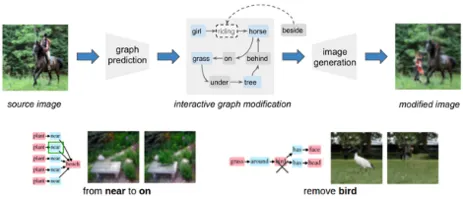

The goal of this project is to develop a scalable, hierarchical scene modeling framework that lets a machine understand its surrounding environment and interact with it in a meaningful way across episodes. We are developing principled approaches that exploit object permanence and prior knowledge of the environment to enable robust, real-time, long-term operation of a machine. We create tools for the community and demonstrate them through applications in augmented reality and robotics. We rely on graph representations, known as scene graphs, to describe the hierarchy of the scene: the nodes of the graph represent objects, and the edges represent relationships between the objects.
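To make the representation concrete, a scene graph can be sketched as a small data structure with labeled nodes and directed, labeled edges. This is only an illustrative sketch, not the project's implementation: the class name `SceneGraph`, its methods, and the example objects are assumptions introduced here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class SceneGraph:
    """Hypothetical minimal scene graph: objects as nodes, relationships as edges."""

    # node id -> object label, e.g. "n0" -> "cup"
    nodes: Dict[str, str] = field(default_factory=dict)
    # directed edges stored as (subject id, predicate, object id)
    edges: Set[Tuple[str, str, str]] = field(default_factory=set)

    def add_object(self, node_id: str, label: str) -> None:
        self.nodes[node_id] = label

    def relate(self, subject: str, predicate: str, obj: str) -> None:
        # Both endpoints must already exist as objects in the scene.
        if subject not in self.nodes or obj not in self.nodes:
            raise KeyError("both endpoints must be added before relating them")
        self.edges.add((subject, predicate, obj))

    def triples(self) -> List[Tuple[str, str, str]]:
        # Human-readable (label, predicate, label) view, sorted for stability.
        return sorted((self.nodes[s], p, self.nodes[o]) for s, p, o in self.edges)


graph = SceneGraph()
graph.add_object("n0", "cup")
graph.add_object("n1", "table")
graph.relate("n0", "standing on", "n1")
print(graph.triples())
```

Keeping node identifiers separate from labels allows several objects of the same class (two chairs, say) to coexist in one graph.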

Keywords: Scene Understanding, Scene Graphs, Robotic Perception, Augmented Reality

Additional Information

By understanding the object constellations in a scene, we have shown that it is possible to perform manipulation at an abstract semantic level. Robotics can also benefit from the method's ability to reason about and manipulate a scene graph. For example, a robot tasked with tidying up a room can, prior to acting, manipulate the scene graph of the perceived scene by moving objects to their designated places and changing their relationships and attributes (e.g. from “clothes lying on the floor” to “folded clothes on a shelf”) to obtain a realistic future view of the room.

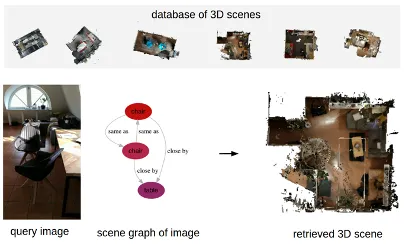
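The tidy-up example can be illustrated as an edit on the graph itself. The sketch below is an assumption-laden toy, not the method used in the project: the dictionary layout and the helpers `replace_relation` and `set_attributes` are hypothetical, and it only edits the symbolic graph without generating any view of the room.

```python
import copy


def replace_relation(graph, old, new):
    """Return a copy of the graph in which one relationship triple is swapped."""
    if old not in graph["relations"]:
        raise ValueError(f"relation {old!r} is not in the graph")
    edited = copy.deepcopy(graph)  # keep the perceived scene untouched
    edited["relations"].remove(old)
    edited["relations"].add(new)
    return edited


def set_attributes(graph, obj, attributes):
    """Return a copy of the graph with the attributes of one object replaced."""
    edited = copy.deepcopy(graph)
    edited["attributes"][obj] = set(attributes)
    return edited


# Perceived scene: "clothes lying on the floor".
perceived = {
    "attributes": {"clothes": set(), "floor": set(), "shelf": set()},
    "relations": {("clothes", "lying on", "floor")},
}

# Planned scene: "folded clothes on a shelf".
planned = replace_relation(
    perceived, ("clothes", "lying on", "floor"), ("clothes", "on", "shelf")
)
planned = set_attributes(planned, "clothes", {"folded"})
print(planned)
```

Returning edited copies rather than mutating in place keeps the perceived graph available for comparison with the planned one, which matches the idea of planning before acting.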

Additionally, we have demonstrated that this abstract representation of the scene is suitable for domain-agnostic retrieval, e.g. to localize a robotic agent. In this application, we have recorded a database of reconstructed 3D environments, and we want to determine the current location of the agent from a single photo taken at that moment. Since the photo is taken in a later episode than the 3D scenes, illumination conditions and object arrangements differ due to human activity. Our abstract, hierarchical scene representations are robust to these changes.
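As a rough intuition for why a graph-level comparison tolerates such changes, retrieval can be sketched as ranking stored scenes by the overlap of their relationship triples with those of the query. The Jaccard similarity used here is a simple stand-in chosen for illustration, not the project's retrieval method, and the scene names, triples, and function names are hypothetical.

```python
def jaccard(a, b):
    """Jaccard similarity between two collections of relationship triples."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # two empty graphs are treated as identical
    return len(a & b) / len(a | b)


def localize(query_triples, database):
    """Return the name of the stored scene most similar to the query graph."""
    return max(database, key=lambda name: jaccard(query_triples, database[name]))


# Hypothetical database of scene graphs extracted from reconstructed 3D scenes.
database = {
    "kitchen": {
        ("cup", "on", "table"),
        ("chair", "next to", "table"),
        ("fridge", "next to", "wall"),
    },
    "bedroom": {
        ("pillow", "on", "bed"),
        ("lamp", "on", "nightstand"),
        ("bed", "next to", "wall"),
    },
}

# Query graph from a later episode: the cup has been moved by a person.
query = {
    ("chair", "next to", "table"),
    ("fridge", "next to", "wall"),
    ("cup", "on", "counter"),
}

print(localize(query, database))
```

Because the comparison operates on object and relationship labels, a change in lighting leaves the score untouched, and a moved object only alters the triples it participates in.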

Research Partner

Prof. Gregory Hager