Adversarial Attacks on Graph Neural Networks via Meta Learning

This page is about our paper "Adversarial Attacks on Graph Neural Networks via Meta Learning" by Daniel Zügner and Stephan Günnemann, published at the International Conference on Learning Representations (ICLR) 2019.

[Paper | GitHub | Poster | BibTeX]

Please cite our paper if you use our code, results, model, or poster.

Abstract

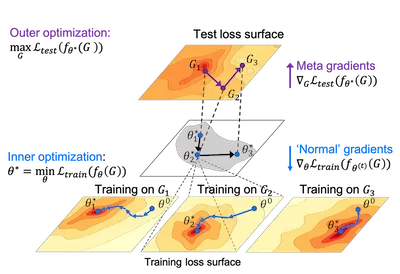

Deep learning models for graphs have advanced the state of the art on many tasks. Despite their recent success, little is known about their robustness. We investigate training-time attacks on graph neural networks for node classification that perturb the discrete graph structure. Our core principle is to use meta-gradients to solve the bilevel problem underlying training-time attacks, essentially treating the graph as a hyperparameter to optimize. Our experiments show that small graph perturbations consistently lead to a strong decrease in performance for graph convolutional networks, and even transfer to unsupervised embeddings. Remarkably, the perturbations created by our algorithm misguide the graph neural networks such that they perform worse than a simple baseline that ignores all relational information. Our attacks do not assume any knowledge about or access to the target classifiers.
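
To make the idea of "treating the graph as a hyperparameter" concrete, the following is a minimal sketch and not the implementation from the paper. It rests on several assumptions made only for illustration: a toy six-node graph, a one-parameter linear model on neighbor-averaged features, the post-training loss on the labeled nodes as the attacker's objective, and central finite differences on a relaxed (continuous) adjacency matrix in place of automatic differentiation through the training procedure. All function names are hypothetical.

```python
import math

def aggregate(adj, x):
    """Average each node's feature over itself and its neighbors (row-normalized A + I)."""
    n = len(adj)
    out = []
    for i in range(n):
        row = [adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
        out.append(sum(r * v for r, v in zip(row, x)) / sum(row))
    return out

def loss(w, h, y, idx):
    """Mean logistic loss of the one-parameter model sigmoid(w * h_i) on nodes idx."""
    total = 0.0
    for i in idx:
        z = w * h[i]
        signed = z if y[i] == 1 else -z
        total += math.log1p(math.exp(-signed))
    return total / len(idx)

def train(adj, x, y, train_idx, steps=50, lr=0.5):
    """Inner problem: fit w by plain gradient descent on the given graph."""
    h = aggregate(adj, x)
    w = 0.0
    for _ in range(steps):
        grad = 0.0
        for i in train_idx:
            p = 1.0 / (1.0 + math.exp(-w * h[i]))
            grad += (p - y[i]) * h[i]
        w -= lr * grad / len(train_idx)
    return w, h

def attack_objective(adj, x, y, train_idx):
    """Outer problem: loss after training on the (perturbed) graph. Assumed proxy."""
    w, h = train(adj, x, y, train_idx)
    return loss(w, h, y, train_idx)

def meta_gradient(adj, x, y, train_idx, eps=1e-4):
    """Approximate d(attack objective)/d(A_ij), retraining for every perturbed entry."""
    n = len(adj)
    grad = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            values = []
            for delta in (eps, -eps):
                pert = [row[:] for row in adj]
                pert[i][j] += delta
                pert[j][i] += delta
                values.append(attack_objective(pert, x, y, train_idx))
            grad[i][j] = grad[j][i] = (values[0] - values[1]) / (2 * eps)
    return grad

def best_flip(adj, x, y, train_idx):
    """Pick the single edge insertion or deletion with the largest predicted loss increase."""
    grad = meta_gradient(adj, x, y, train_idx)
    n = len(adj)
    best, best_score = None, -math.inf
    for i in range(n):
        for j in range(i + 1, n):
            direction = 1.0 - 2.0 * adj[i][j]  # +1 inserts an edge, -1 deletes one
            score = grad[i][j] * direction
            if score > best_score:
                best, best_score = (i, j), score
    return best, best_score

def apply_flip(adj, edge):
    """Return a copy of adj with the given undirected edge toggled."""
    i, j = edge
    out = [row[:] for row in adj]
    out[i][j] = out[j][i] = 1.0 - out[i][j]
    return out

# Toy data (assumed): two triangles joined by the edge (2, 3).
n = 6
adj = [[0.0] * n for _ in range(n)]
for i, j in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    adj[i][j] = adj[j][i] = 1.0
x = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0]
y = [1, 1, 1, 0, 0, 0]
train_idx = [0, 1, 3, 4]

edge, score = best_flip(adj, x, y, train_idx)
poisoned = apply_flip(adj, edge)
print("flip", edge, "score", score)
print("loss before", attack_objective(adj, x, y, train_idx))
print("loss after ", attack_objective(poisoned, x, y, train_idx))
```

The score is only a first-order estimate, so a discrete flip is not guaranteed to raise the loss. Retraining for every candidate entry, as this sketch does, also scales poorly. Backpropagating through the unrolled training steps yields the gradient for all entries of the adjacency matrix in a single pass.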