Revisiting Robustness in Graph Machine Learning

This page links to additional material for our paper:

Revisiting Robustness in Graph Machine Learning

Lukas Gosch, Daniel Sturm, Simon Geisler, Stephan Günnemann

International Conference on Learning Representations (ICLR), 2023


Abstract

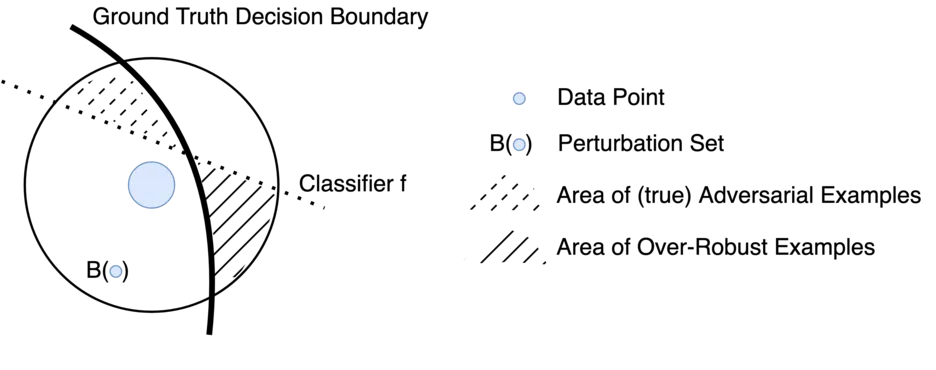

Many works show that node-level predictions of Graph Neural Networks (GNNs) are not robust to small, often termed adversarial, changes to the graph structure. However, because manual inspection of a graph is difficult, it is unclear whether the studied perturbations always preserve a core assumption of adversarial examples: that the semantic content is unchanged. To address this problem, we introduce a more principled notion of an adversarial graph, which is aware of semantic content change. Using Contextual Stochastic Block Models (CSBMs) and real-world graphs, our results uncover: i) for a majority of nodes, the prevalent perturbation models include a large fraction of perturbed graphs that violate the unchanged-semantics assumption; ii) surprisingly, all assessed GNNs show over-robustness, that is, robustness beyond the point of semantic change. We find this to be a phenomenon complementary to adversarial robustness, related to the small degree of nodes and the dependence of their class membership on the neighbourhood structure.
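For readers unfamiliar with CSBMs, the sketch below shows how a graph can be sampled from one. It is an illustration only, not the authors' code: the helper name `sample_csbm` is hypothetical, and the parameterization (two classes with a uniform prior, edge probability `p` within a class and `q` across classes, Gaussian features centred at `+mu` or `-mu` depending on the class) is one common textbook variant assumed here. The exact CSBM setup used in the paper may differ.

```python
import numpy as np

def sample_csbm(n, p, q, mu, sigma=1.0, seed=None):
    """Sample a two-class CSBM graph (hypothetical helper, assumed parameterization).

    Returns labels y in {0, 1}, a symmetric 0/1 adjacency matrix A without
    self-loops, and an (n, d) feature matrix X.
    """
    rng = np.random.default_rng(seed)
    mu = np.asarray(mu, dtype=float)              # class-mean direction, shape (d,)
    y = rng.integers(0, 2, size=n)                # labels drawn with a uniform prior
    same = y[:, None] == y[None, :]               # True where two nodes share a class
    probs = np.where(same, p, q)                  # p within a class, q across classes
    upper = np.triu(rng.random((n, n)) < probs, k=1)  # sample each node pair once
    A = (upper | upper.T).astype(int)             # symmetrize; diagonal stays 0
    signs = np.where(y == 1, 1.0, -1.0)           # class 1 centred at +mu, class 0 at -mu
    X = signs[:, None] * mu[None, :] + sigma * rng.standard_normal((n, mu.shape[0]))
    return y, A, X

# Example: 100 nodes, denser within classes than across, 8-dimensional features.
y, A, X = sample_csbm(n=100, p=0.1, q=0.01, mu=np.full(8, 0.5), seed=0)
```

Because the generative model is fully known, one can in principle compute how likely a node's label is given its features and its neighbourhood. This is what makes synthetic models like CSBMs useful for asking whether a structure perturbation has actually changed a node's semantic content.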

Cite

You can cite our paper as follows:

@inproceedings{gosch2023revisiting,
  title = {Revisiting Robustness in Graph Machine Learning},
  author = {Gosch, Lukas and Sturm, Daniel and Geisler, Simon and G{\"u}nnemann, Stephan},
  booktitle = {International Conference on Learning Representations (ICLR)},
  year = {2023}
}