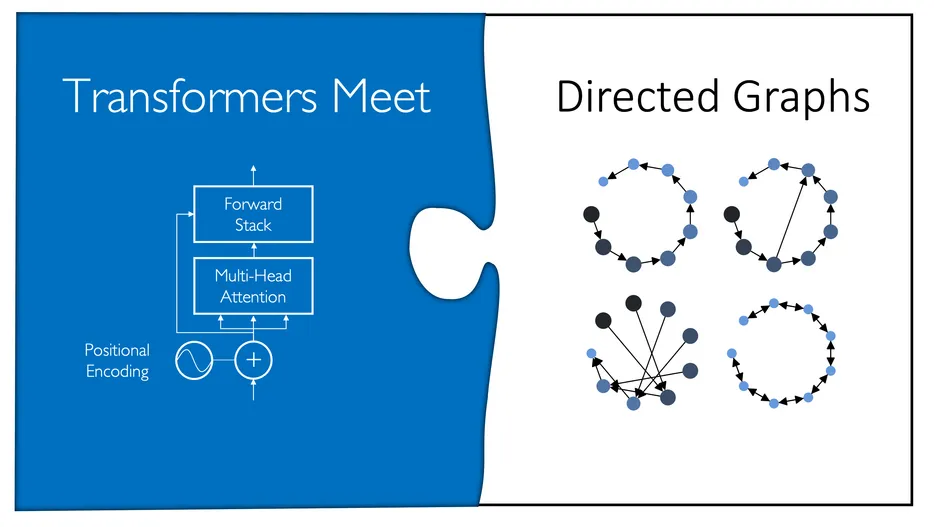

Transformers Meet Directed Graphs

Abstract

Transformers were originally proposed as a sequence-to-sequence model for text but have become vital for a wide range of modalities, including images, audio, video, and undirected graphs. However, transformers for directed graphs are a surprisingly underexplored topic, despite their applicability to ubiquitous domains including source code and logic circuits.

In this work, we propose two direction- and structure-aware positional encodings for directed graphs: (1) the eigenvectors of the Magnetic Laplacian, a direction-aware generalization of the combinatorial Laplacian; and (2) directional random walk encodings. Empirically, we show that the extra directionality information is useful in various downstream tasks, including correctness testing of sorting networks and source code understanding. Together with a data-flow-centric graph construction, our model outperforms the prior state of the art on the Open Graph Benchmark Code2 by a relative 14.7%.
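To make the first encoding more concrete, the following is a minimal NumPy sketch of a Magnetic Laplacian and its eigenvectors for a small unweighted directed graph. It is not the released implementation. The function names `magnetic_laplacian` and `magnetic_positional_encoding`, the potential `q = 0.25`, and the symmetrization by element-wise maximum are illustrative assumptions. The sketch uses the common form L = D_s - A_s ⊙ exp(iΘ) with Θ = 2πq(A - Aᵀ), where A_s is the symmetrized adjacency matrix and D_s its degree matrix.

```python
import numpy as np


def magnetic_laplacian(adj: np.ndarray, q: float = 0.25) -> np.ndarray:
    """Build a Hermitian Magnetic Laplacian (hypothetical helper, unnormalized)."""
    adj = np.asarray(adj, dtype=float)
    # Assumption: symmetrize an unweighted graph with an element-wise maximum.
    sym = np.maximum(adj, adj.T)
    deg = np.diag(sym.sum(axis=1))
    # The edge direction is stored in the complex phase: +theta along an edge,
    # -theta against it.
    theta = 2.0 * np.pi * q * (adj - adj.T)
    return deg - sym * np.exp(1j * theta)


def magnetic_positional_encoding(adj: np.ndarray, q: float = 0.25, k: int = 2):
    """Return the k smallest eigenvalues and their complex eigenvectors."""
    lap = magnetic_laplacian(adj, q)
    # eigh handles Hermitian matrices and returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(lap)
    return eigvals[:k], eigvecs[:, :k]


# Directed path 0 -> 1 -> 2 (illustrative toy graph).
adj = np.array(
    [
        [0, 1, 0],
        [0, 0, 1],
        [0, 0, 0],
    ],
    dtype=float,
)
lap = magnetic_laplacian(adj, q=0.25)
eigvals, eigvecs = magnetic_positional_encoding(adj, q=0.25, k=3)
print(np.round(eigvals, 6))
```

With `q = 0` the phase term vanishes and the matrix reduces to the combinatorial Laplacian of the symmetrized graph. Reversing every edge yields the complex conjugate matrix, which is how the encoding distinguishes a graph from its reverse.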

Links

[Paper | GitHub | YouTube (short) | YouTube (long)]

Resources

- Pretrained models with Magnetic Laplacian positional encodings on OGB Code2 (10 random seeds)

- Preprocessed OGB Code2 dataset following our graph construction (save it under your chosen "data_root" in the subfolder "ogbg-code2-norev-df")

Cite

Please cite our paper if you use the method in your own work:

@inproceedings{geisler2023_transformers_meet_directed_graphs,

title = {Transformers Meet Directed Graphs},

author = {Geisler, Simon and Li, Yujia and Mankowitz, Daniel and Cemgil, Ali Taylan and G\"unnemann, Stephan and Paduraru, Cosmin},

booktitle = {International Conference on Machine Learning, {ICML}},

year = {2023},

}