Adversarial Training for Graph Neural Networks: Pitfalls, Solutions, and New Directions

This page links to additional material for our paper:

Adversarial Training for Graph Neural Networks: Pitfalls, Solutions, and New Directions

Lukas Gosch*, Simon Geisler*, Daniel Sturm*, Bertrand Charpentier, Daniel Zügner, Stephan Günnemann

NeurIPS, 2023

* Equal contribution


Abstract

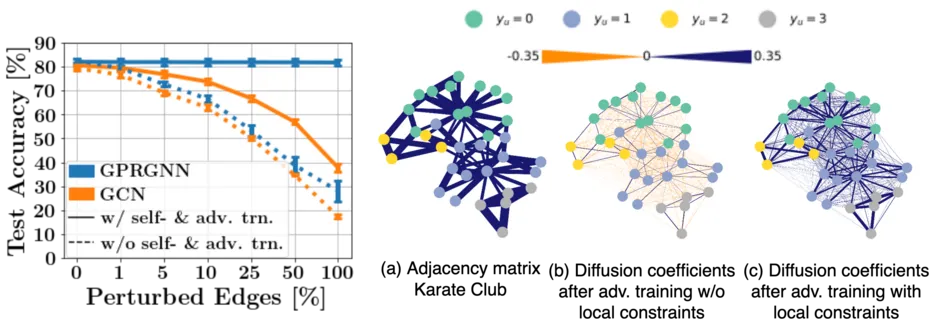

Despite its success in the image domain, adversarial training has not (yet) stood out as an effective defense for Graph Neural Networks (GNNs) against graph structure perturbations. In the pursuit of fixing adversarial training, (1) we show and overcome fundamental theoretical as well as practical limitations of the graph learning setting adopted in prior work; (2) we reveal that more flexible GNNs based on learnable graph diffusion are able to adjust to adversarial perturbations, while the learned message passing scheme is naturally interpretable; (3) we introduce the first attack for structure perturbations that, while targeting multiple nodes at once, is capable of handling global (graph-level) as well as local (node-level) constraints. With these contributions, we demonstrate that adversarial training is a state-of-the-art defense against adversarial structure perturbations.

Cite

You can cite our paper as follows:

@inproceedings{gosch2023adversarial,
  title={Adversarial Training for Graph Neural Networks: Pitfalls, Solutions, and New Directions},
  author={Lukas Gosch and Simon Geisler and Daniel Sturm and Bertrand Charpentier and Daniel Z{\"u}gner and Stephan G{\"u}nnemann},
  booktitle={Thirty-seventh Conference on Neural Information Processing Systems (NeurIPS)},
  year={2023},
}